The growth of domains such as e-learning, ubiquitous computing, ambient intelligence and the Internet of Things exposes users to demanding interactive environments on a daily basis, which increases the need for good user experience (UX). Emotional aspects of UX have attracted growing interest recently. The main goal of evaluating a user’s emotional experience is to identify system flaws that trigger negative emotions such as stress.

Stress is a condition in which a person’s bodily functions change rapidly from calmness to high arousal. This transition is accompanied by biochemical, physiological and behavioral changes. Most people interpret stress as a negative experience or emotion. Others argue that stress can provide a beneficial boost under specific circumstances, for example when meeting an important deadline for a report submission or solving an exercise during an exam. However, frequent exposure to stressful conditions is a precursor of chronic stress, which can harm health.

Emotions and User Experience (UX)

In 1993, while at Apple Computer, Inc., Donald Norman introduced the term User Experience (UX) as a broader concept that incorporates usability evaluation. UX extends existing evaluation methods by taking into account factors such as how users feel while interacting with a system. Emotions such as stress may also affect users’ performance, and their presence in interactive computer environments can be interpreted as a UX issue. Thus, assessing stress is particularly important in this context.

More recently, emotional state analysis has become a popular evaluation method in Human-Computer Interaction (HCI), especially in usability evaluation studies. The relation between emotions and physiological signals has shifted the research community’s attention beyond traditional UX evaluation methods based on self-reported data, such as those collected through questionnaires. In addition, practical characteristics of physiological signal collection, such as the low cost and small size of recording sensors and their support for communication technologies like Bluetooth and Wi-Fi, have further promoted their use. However, a significant drawback of physiological signals is that they are prone to noise from sources such as humidity and temperature.

Available approaches that use physiological signals for emotional state analysis suffer from an important limitation. Specifically, the existing physiological signal datasets used for emotion analysis rely on stimuli that induce intense emotions, such as watching a scary movie, listening to a favorite song, or gaming. However, emotion identification is of greater interest in subtle HCI tasks, and this remains an open research topic.

The PhysiOBS Tool

PhysiOBS is a Windows application that aims to support researchers and practitioners in the demanding task of stress detection. It supports post-study analysis and therefore requires that all of a user’s data sources are appropriately synchronized beforehand.

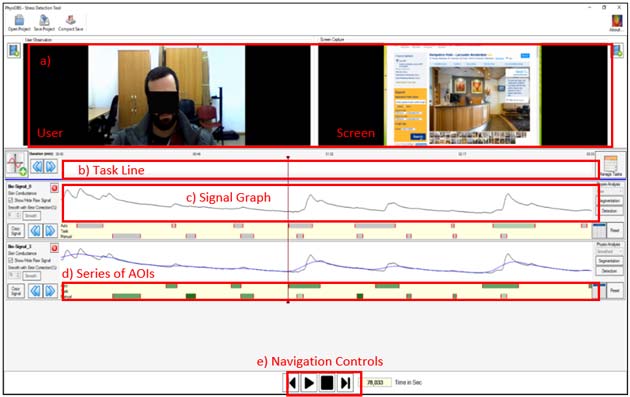

The main interface of the tool is shown in Figure 1. PhysiOBS needs at least one video recording from the user’s session. If both the user’s video and a screen recording are available, the evaluator can watch them at the same time. The evaluator can then define tasks and assign them to specific time periods in the ‘Task Line’ area of the tool. Extra information such as duration, task result and the evaluator’s general comments can also be added.

Figure 1. PhysiOBS: a tool that combines physiological measurements, observation and self-reported data. (a) Concurrent view of the user’s video and screen recordings, (b) task/subtasks view, (c) physiological signal(s) view, (d) areas of interest (AOIs), (e) navigation controls, synchronized across all available views. (Author Provided)

More importantly, the user’s physiological signals can also be loaded into PhysiOBS and displayed in a ‘Signal Graph’. The evaluator can use the embedded signal smoothing mechanism without needing a signal analysis background. Additionally, the tool provides an auto-segmentation mechanism that suggests areas of interest (AOIs) to the evaluator. The result of this auto-segmentation is shown as a series of AOI periods, with grey denoting the identified AOIs. Evaluators can also add AOIs manually.
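To make the idea of smoothing and auto-segmentation more concrete, here is a minimal Python sketch. It is not the mechanism used by PhysiOBS, whose algorithms are not described here. It assumes a simple centered moving average for smoothing and a fixed threshold for suggesting AOIs; the function names `moving_average` and `suggest_aois`, the window size and the threshold are all hypothetical choices made for illustration.

```python
def moving_average(signal, window=5):
    """Smooth a signal with a centered moving average.

    Samples near the edges are averaged over a shorter window.
    (Assumed technique for illustration, not the PhysiOBS method.)
    """
    if window < 1:
        raise ValueError("window must be >= 1")
    half = window // 2
    smoothed = []
    for i in range(len(signal)):
        lo = max(0, i - half)
        hi = min(len(signal), i + half + 1)
        chunk = signal[lo:hi]
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed


def suggest_aois(signal, threshold):
    """Return (start, end) index pairs, end exclusive, for every
    contiguous run of samples whose value exceeds the threshold.

    (Hypothetical segmentation rule, chosen for illustration only.)
    """
    aois = []
    start = None
    for i, value in enumerate(signal):
        if value > threshold and start is None:
            start = i                      # a new candidate AOI begins
        elif value <= threshold and start is not None:
            aois.append((start, i))        # the current AOI ends
            start = None
    if start is not None:                  # AOI still open at end of signal
        aois.append((start, len(signal)))
    return aois
```

An evaluator-facing tool could run `suggest_aois(moving_average(raw_signal), threshold)` to obtain candidate periods, then let the evaluator accept, adjust or discard each suggestion.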

Next, the evaluator may feed AOIs into the integrated stress prediction mechanism, which employs four trained classifiers. The prediction result is calculated as the mean of the four classifiers’ outputs. For example, if C1 = 1, C2 = 1, C3 = 1 and C4 = 0 (where 1 = true and 0 = false), the prediction result is 75% stress. Varying shades of green depict the result’s intensity. The prediction mechanism was developed from 182 skin conductance signals recorded from 31 participants who performed five stressful interaction tasks and a baseline no-stress condition. These tasks were selected based on face-to-face interviews with 15 typical computer users, who were asked to list stressful HCI tasks from their personal experience. The best recognition accuracy was achieved by the C-SVM classifier (Mean = 98.8%, SD = 0.6%). This very high accuracy demonstrates the potential of using physiological signals for stress recognition in the context of subtle HCI tasks.
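The mean-of-classifiers rule described above can be sketched in a few lines of Python. This is only an illustration of the arithmetic, assuming each classifier emits a binary vote (1 = stress, 0 = no stress); the function name `stress_score` is hypothetical and does not come from PhysiOBS.

```python
def stress_score(votes):
    """Return the mean of binary classifier votes (1 = stress, 0 = no stress)."""
    if not votes:
        raise ValueError("at least one classifier vote is required")
    if any(v not in (0, 1) for v in votes):
        raise ValueError("votes must be 0 or 1")
    return sum(votes) / len(votes)


# The example from the text: C1 = 1, C2 = 1, C3 = 1, C4 = 0
votes = [1, 1, 1, 0]
print(f"Prediction result: {stress_score(votes):.0%} stress")
# prints "Prediction result: 75% stress"
```

With four classifiers the score can only take the values 0%, 25%, 50%, 75% or 100%, which is consistent with mapping it to a small number of green shades.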

In addition, all AOIs can optionally be enriched with the user’s self-reported data in the form of comments. Study findings suggest some alignment between self-reported stress and measured skin conductance during the use of interactive applications. The evaluator’s sense-making of the available data is supported by navigation controls, which are synchronized across all available views. Another important function of the tool is the option to save and load projects: the evaluator can save each participant’s evaluation and edit it later.

In the future, the aim is to validate these findings through additional studies that follow the same methodology but use more physiological signals. In this way, the emotionally labelled dataset for stress recognition in subtle HCI tasks will be extended and made freely available to the research community. Finally, a real-time stress detection mechanism is also under investigation.

Top image: Under stress. (CC BY 2.0)

By Michalis Xenos, Alexandros Liapis, Christos Katsanos

Michalis Xenos (BSc 1991, PhD 1996) is a Professor at the Department of Computer Engineering and Informatics at the University of Patras. He was the director of the graduate (2013-2016) and the postgraduate (2009-2012) Computer Science courses at the Hellenic Open University. He was a member of the European Steering Committee for the OpenUpEdu Group for the European MOOCs and is the founder and director of the Software Quality Research Group. He is now leading 4 EU-funded research projects and has led and participated in over 50 research and development projects in the areas of software engineering and educational technologies. His current research interests include Software Quality, Human Computer Interaction, Human Robot Interaction and Educational Technologies. He has authored or co-authored 8 books and over 150 papers in international journals and conferences.

---

Alexandros Liapis is a PhD candidate in the School of Sciences and Technology of the Hellenic Open University. His research interests focus on Human-Computer Interaction (HCI), user interface evaluation, physiobiological data analysis and emotion recognition in HCI. He is a certified Java programmer (EUCIP Certification) and has developed many software applications in the private sector. Since December 2011 he has been working in the Internal Assessment and Training Unit and as a research member of the Software Quality group of the Hellenic Open University.

---

Dr. Christos Katsanos holds a PhD in Human Computer Interaction and a Diploma in Electrical and Computer Engineering from the University of Patras. Today, he is an Adjunct Consulting Professor at the Open University of Cyprus, an Adjunct Assistant Professor at the Technological Educational Institute of Western Greece, an Adjunct Consulting Professor (theses supervisor) at the Hellenic Open University, and a senior software and usability engineer at the Internal Assessment and Training Unit of the Hellenic Open University. His research interests include human-computer interaction, human-robot interaction, Web science, Web accessibility, and educational technology.

---

