Early efforts to build artificial silicon cochleae were largely driven by their potential applications in hearing aids, cochlear implants and other portable devices that demand real-time, low-power signal processing for audio event recognition, including speech. Recent advances in robotics have increased interest in the topic further, since equipping robots with human-like sensory capabilities lays the groundwork for the field's future development.

Matching the biological cochlea’s sound sensitivity, frequency selectivity and dynamic range is a challenge. Previous attempts employed spiking cochlea models to describe the analog processing and the spike generation process. Nearly all silicon cochleae are built around second-order low-pass or band-pass filters. Reconstructing the audio input from the artificial cochlea's spikes is difficult, particularly for mixed-signal (analog/digital) designs, because of model non-linearity and the variance caused by random transistor mismatch.
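To make the idea of a filter-based spiking channel concrete, here is a minimal software sketch of a single channel: a second-order band-pass filter, followed by half-wave rectification and a simple integrate-and-fire spike generator. It is an illustration only and does not describe the circuitry of any particular chip; the function names, the quality factor `q`, the `threshold` value and the use of a standard digital biquad filter are all assumptions made for this example.

```python
import math

def bandpass_coeffs(f0, q, fs):
    """Coefficients of a standard second-order (biquad) band-pass filter.

    f0 is the centre frequency in Hz, q the quality factor and fs the
    sample rate in Hz. All three are illustrative parameters.
    """
    w0 = 2.0 * math.pi * f0 / fs
    alpha = math.sin(w0) / (2.0 * q)
    a0 = 1.0 + alpha
    b = (alpha / a0, 0.0, -alpha / a0)                     # feed-forward
    a = (-2.0 * math.cos(w0) / a0, (1.0 - alpha) / a0)     # feedback
    return b, a

def channel_spikes(samples, fs, f0, q=5.0, threshold=5.0):
    """Return spike times (seconds) for one hypothetical cochlea channel."""
    b, a = bandpass_coeffs(f0, q, fs)
    x1 = x2 = y1 = y2 = 0.0   # filter state
    membrane = 0.0            # integrate-and-fire accumulator
    spikes = []
    for n, x in enumerate(samples):
        # Second-order band-pass filter (direct form I).
        y = b[0] * x + b[1] * x1 + b[2] * x2 - a[0] * y1 - a[1] * y2
        x2, x1 = x1, x
        y2, y1 = y1, y
        # Half-wave rectification, then integration.
        membrane += max(y, 0.0)
        if membrane >= threshold:
            spikes.append(n / fs)
            membrane -= threshold
    return spikes

def tone(freq, fs, duration):
    """Generate a unit-amplitude sine tone as a list of samples."""
    return [math.sin(2.0 * math.pi * freq * n / fs)
            for n in range(int(fs * duration))]
```

A channel tuned to 1 kHz fires far more often for a 1 kHz tone than for a 4 kHz tone, which is the frequency selectivity the paragraph refers to. A bank of such channels with different centre frequencies would give a crude software analogue of a multi-channel cochlea.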

In response to this challenge, researchers at the Institute of Neuroinformatics, University of Zurich, are working on so-called neuromorphic cognitive systems (grounded in neuromorphic engineering): neuromorphic architectures that can learn and reason about which actions to take in response to combinations of external stimuli, internal states and behavioral objectives. Their recently published neuromorphic circuit produces a continuous stream of analog random samples. It does this by encoding the temporal difference between the onset times of two subsequent voltage jumps, which mimic the action potentials of biological neurons. In essence, it is an event-based auditory neuromorphic system that works selectively and is therefore robust to distractors and noise.

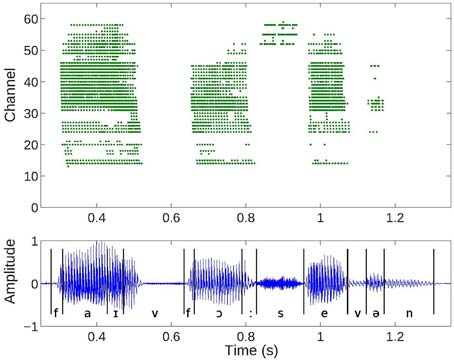

Cochlea spike responses to a digit sequence “5, 4, 7” (top) and the corresponding audio waveform (bottom). Events or spiral ganglion cell outputs from the 64 channels of one ear of the binaural AEREAR2 chip. Low channels correspond to low frequencies and high channels to high frequencies.

The new version of the neuromorphic auditory system embeds a subset of the properties of the biological cochlea, including the spatial frequency-selective filtering of the basilar membrane, the rectification property of the inner hair cells, the local automatic gain control mechanism of the outer hair cells, and the generation of spikes by the spiral ganglion cells. It consists of two silicon cochleae, each of them ultra-low in power consumption (only 0.5 mW, which is three orders of magnitude less than previous versions) and integrating 64 frequency band channels (filters) covering a wide range from low pitches to high. As in the human auditory system, the two cochleae can be moved independently of each other, allowing the sound source to be localized from the inter-aural time difference between the sound waves reaching each cochlea.
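As a rough illustration of localization from the inter-aural time difference (ITD), the sketch below estimates the ITD from two lists of spike times by histogramming pairwise time differences, then converts it to an angle with the simple far-field relation sin(θ) = ITD · c / d. This is not the method used by the researchers; the function names, the ear spacing of 0.15 m, the speed of sound of 343 m/s, the lag window and the sign convention are all assumptions chosen for the example.

```python
import math
from collections import Counter

SPEED_OF_SOUND = 343.0   # m/s, assumed value for air at room temperature
EAR_DISTANCE = 0.15      # m, hypothetical spacing between the two cochleae

def estimate_itd(left_spikes, right_spikes, max_lag=500e-6, bin_width=10e-6):
    """Return the most common (right - left) spike time difference in seconds.

    Only spike pairs closer together than max_lag are counted. Returns None
    if no pair falls inside the window.
    """
    counts = Counter()
    for t_left in left_spikes:
        for t_right in right_spikes:
            lag = t_right - t_left
            if abs(lag) <= max_lag:
                counts[round(lag / bin_width)] += 1
    if not counts:
        return None
    best_bin, _ = max(counts.items(), key=lambda item: item[1])
    return best_bin * bin_width

def itd_to_azimuth(itd, ear_distance=EAR_DISTANCE, c=SPEED_OF_SOUND):
    """Convert an ITD to an azimuth in degrees using sin(theta) = itd * c / d.

    With the convention used here, a positive ITD means the sound reached
    the left ear first, so positive angles point toward the left.
    """
    ratio = max(-1.0, min(1.0, itd * c / ear_distance))
    return math.degrees(math.asin(ratio))
```

For example, if every spike in the right ear arrives 200 µs after its counterpart in the left ear, `estimate_itd` returns roughly 200 µs, which under the assumed geometry corresponds to a source a little under 30 degrees to the left. The pairwise loop is quadratic in the number of spikes, which is acceptable for a sketch but not for a real-time system.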

Although the sensing technology, capable of sensitive “hearing” across the human frequency range, is in place, event recognition from sound data is still under development. The system is like a newborn child who has the full capacity to hear sounds but cannot yet associate them with events in the environment (e.g. a barking dog, a person speaking, a car engine, rain or a ringing phone). The researchers at the Institute of Neuroinformatics intend to combine the sensitive, low-power silicon cochleae with deep learning algorithms, with the aim of improving on existing artificial auditory perception.

Top image by Katherine Bourzac (spectrum.ieee.org)
